It is no surprise that real-time systems have become a core requirement for SaaS platforms, fintech infrastructure, trading dashboards, collaborative tools, analytics, and other products. Node.js is at the center of this evolution: it enables high-performance, event-driven systems capable of handling thousands to millions of simultaneous connections.

For US-based SaaS companies, building a reliable real-time SaaS architecture means more than just adding WebSockets. It requires a scalable backend strategy, event-driven communication, and cloud-native infrastructure design. Real-time app development with Node.js is a strategic investment for modern SaaS platforms.

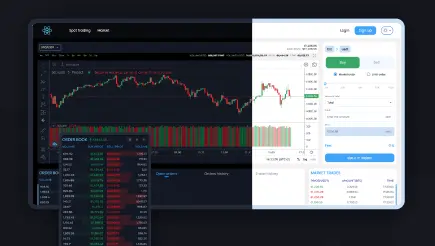

Across multiple fintech and blockchain projects, Peiko has been building systems where low-latency data delivery is critical. Solutions such as trading dashboards, crypto exchange engines, and liquidity aggregation platforms rely on continuously updated streams of information rather than traditional request–response cycles.

In practice, these types of systems typically combine:

- Node.js event-driven architecture for handling concurrent connections efficiently,

- WebSocket-based communication for real-time client updates (e.g., price feeds, order books, trade execution status),

- Streaming data pipelines to process high-frequency market or transactional events,

- API orchestration layers to integrate multiple external data sources and services.

For example, in trading and exchange-related platforms, users expect instant updates to balances, orders, and market movements without page refreshes. This requires a backend capable of pushing updates in real time while remaining scalable under high load. This is why companies investing in real-time app development with Node.js gain a significant competitive advantage in modern SaaS markets.

This article breaks down how to design, build, and scale production-grade real-time systems using Node.js, WebSockets, streaming pipelines, and event-driven patterns.

Node.js WebSocket Server Architecture Explained

A Node.js WebSocket server provides persistent, bi-directional communication between client and server. Unlike REST APIs, WebSockets remove the need for constant polling: the server can push data as soon as it is available, which reduces latency significantly.

This makes WebSocket-based systems essential for applications such as trading platforms, live dashboards, multiplayer systems, chat applications, and collaborative tools where immediate synchronization is critical. A Node.js WebSocket server must be designed for horizontal scalability and efficient connection handling, and it must integrate with distributed messaging systems to support real-time synchronization across instances.

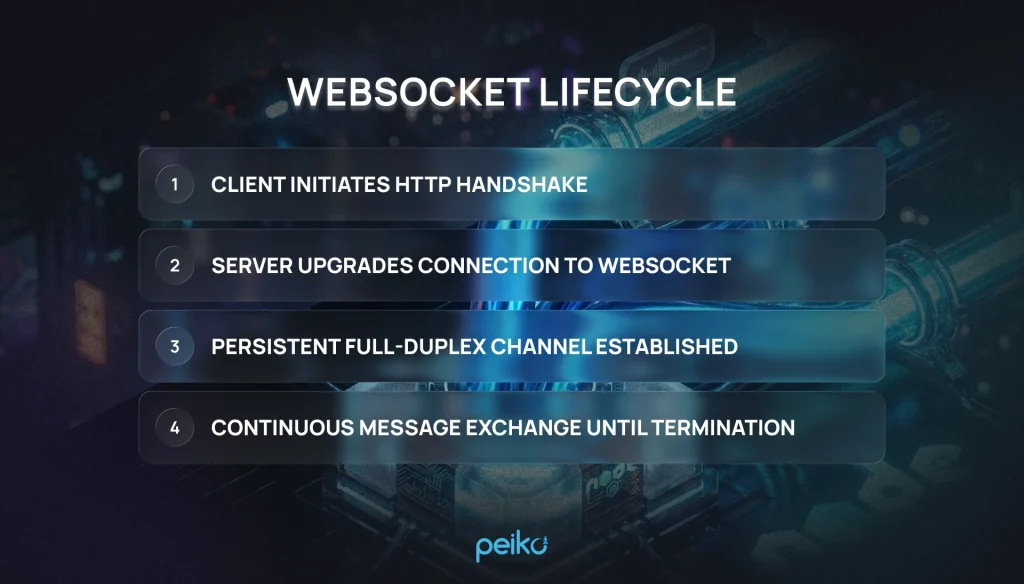

WebSocket Lifecycle

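A WebSocket connection begins as an HTTP request that is upgraded during the opening handshake, stays open for bidirectional message exchange, and ends when either side closes it. This lifecycle enables real-time applications such as trading platforms, chat systems, and live dashboards, where immediate data synchronization is essential.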

Connection Handling

Efficient connection management is critical for scalability in WebSocket-based systems. Unlike stateless HTTP requests, each WebSocket connection remains active and continuously consumes server resources.

To handle this effectively, production systems typically:

- Maintain a connection registry to track active clients.

- Use in-memory stores or distributed caches to manage connection metadata.

- Implement cleanup mechanisms to remove stale or inactive connections.

Without proper management, high numbers of concurrent connections can quickly lead to memory pressure and decreased performance.
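The registry and cleanup steps above can be sketched in a few lines. This is a minimal in-memory version, not a production implementation: the `ConnectionRegistry` class, its method names, and the 30-second staleness threshold are all assumptions made for illustration, and `socket` stands for any object with a `close()` method.

```javascript
// Minimal in-memory connection registry with stale-connection cleanup.
// `socket` is any object exposing a close() method (for example a WebSocket).
class ConnectionRegistry {
  constructor(staleAfterMs = 30_000) {
    this.staleAfterMs = staleAfterMs;
    this.connections = new Map(); // clientId -> { socket, lastSeen }
  }

  add(clientId, socket, now = Date.now()) {
    this.connections.set(clientId, { socket, lastSeen: now });
  }

  // Call on every inbound message or heartbeat reply from the client.
  touch(clientId, now = Date.now()) {
    const entry = this.connections.get(clientId);
    if (entry) entry.lastSeen = now;
  }

  remove(clientId) {
    this.connections.delete(clientId);
  }

  // Close and drop every connection that has been silent for too long.
  // Returns the ids that were removed.
  sweep(now = Date.now()) {
    const removed = [];
    for (const [clientId, entry] of this.connections) {
      if (now - entry.lastSeen > this.staleAfterMs) {
        entry.socket.close();
        this.connections.delete(clientId);
        removed.push(clientId);
      }
    }
    return removed;
  }

  get size() {
    return this.connections.size;
  }
}
```

In a running server, `sweep()` would typically be called from a `setInterval` timer, and `touch()` from the message and heartbeat handlers. In a multi-instance deployment the same metadata would usually live in a distributed cache instead of a local `Map`.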

Authentication

In production-grade systems, WebSocket connections are secured during the initial handshake by validating authentication credentials before the connection is established. A common approach is JWT-based authentication, where the token is verified at the upgrade stage to ensure only authorized clients can initiate a persistent connection.

This approach is critical in fintech, trading, and enterprise systems where real-time data access must be strictly controlled and tied to authenticated user sessions.
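As a rough illustration of verifying a token at the upgrade stage, the sketch below checks an HS256-signed JWT using only Node's built-in `crypto` module and rejects the upgrade request if the check fails. The function names `verifyHs256Jwt` and `guardUpgrades`, and the choice to pass the token in a `token` query parameter, are assumptions for this example. A production system would normally rely on a maintained JWT library rather than hand-rolled verification.

```javascript
const crypto = require('node:crypto');

// Verify an HS256-signed JWT and return its payload, or null if invalid.
function verifyHs256Jwt(token, secret, nowSeconds = Math.floor(Date.now() / 1000)) {
  const parts = String(token).split('.');
  if (parts.length !== 3) return null;
  const [headerB64, payloadB64, signatureB64] = parts;

  try {
    const header = JSON.parse(Buffer.from(headerB64, 'base64url').toString('utf8'));
    if (!header || header.alg !== 'HS256') return null; // accept only the expected algorithm

    const expected = crypto
      .createHmac('sha256', secret)
      .update(`${headerB64}.${payloadB64}`)
      .digest();
    const actual = Buffer.from(signatureB64, 'base64url');
    if (actual.length !== expected.length || !crypto.timingSafeEqual(actual, expected)) {
      return null;
    }

    const payload = JSON.parse(Buffer.from(payloadB64, 'base64url').toString('utf8'));
    if (!payload || typeof payload !== 'object') return null;
    if (typeof payload.exp === 'number' && payload.exp <= nowSeconds) return null; // expired
    return payload;
  } catch {
    return null; // malformed JSON in header or payload
  }
}

// Reject unauthenticated upgrade requests before any WebSocket is created.
// `server` is an http.Server; reading the token from the query string is an assumption.
function guardUpgrades(server, secret, onAuthenticated) {
  server.on('upgrade', (req, socket, head) => {
    const url = new URL(req.url, 'http://localhost');
    const user = verifyHs256Jwt(url.searchParams.get('token'), secret);
    if (!user) {
      socket.write('HTTP/1.1 401 Unauthorized\r\nConnection: close\r\n\r\n');
      socket.destroy();
      return;
    }
    onAuthenticated(req, socket, head, user); // hand off to the WebSocket library here
  });
}
```

With the `ws` library, `onAuthenticated` is where a server created with `noServer: true` would call `handleUpgrade`, so the authenticated user can be attached to the connection from its first message.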

Horizontal scaling

Scaling WebSocket infrastructure in Node.js introduces additional complexity compared to traditional REST APIs. Since connections are stateful, multiple server instances must share session information to remain synchronized.

Common scaling strategies include:

- Using Redis Pub/Sub to synchronize events across instances.

- Leveraging message brokers like Kafka or RabbitMQ for event distribution.

- Implementing a load balancer with sticky sessions when necessary.

These approaches ensure that messages reach the correct connected clients regardless of which server instance handles the event.

WebSocket vs REST vs SSE

| Feature | WebSockets | REST API | Server-Sent Events (SSE) |
| --- | --- | --- | --- |
| Communication | Bi-directional | Request/Response | One-way (server → client) |
| Latency | Very low | Medium | Low |
| Scalability | High (complex) | Very high | Medium |
| Use Case | Chat, trading, gaming | CRUD apps | Notifications, feeds |

Each approach serves different architectural needs. While REST remains ideal for standard CRUD operations, WebSockets are preferred for real-time bidirectional systems, and SSE works well for lightweight server-to-client streaming scenarios.

Building a WebSocket Node.js Backend for Production

A production-ready WebSocket backend in Node.js is more than just opening socket connections. It requires solid architecture and careful system design, including distributed messaging and fault tolerance, to deliver consistent performance and reliability across distributed environments under real-world load.

At scale, real-time systems must handle thousands to millions of active connections while maintaining low latency and reliable message delivery. This is where architectural decisions such as transport choice, session handling, and distributed messaging become critical.

A modern scalable websocket architecture is built around distributed components that work together to manage state, synchronize events, and balance traffic efficiently across multiple instances.

Socket.io vs native ws

For real-time communication, developers often choose between native WebSocket (ws) and Socket.io. The native approach is lightweight and performance-focused, while Socket.io adds higher-level features like automatic reconnection and fallback transports.

Socket.io provides a higher-level abstraction over WebSockets with built-in production features:

- Automatic reconnection handling,

- Fallback transport mechanisms (e.g., long polling),

- Event-based communication model,

- Easier handling of unstable network conditions.

It is commonly used in applications where developer experience and reliability are prioritized over raw performance.

Native WebSocket (ws)

The ws library provides a lightweight and minimal implementation:

- Lower overhead and better raw performance,

- Full control over connection lifecycle,

- No built-in abstractions or fallback layers.

It is preferred in high-performance systems where fine-grained control and efficiency are critical.

Sticky sessions

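WebSocket connections are long-lived and stateful. Once established, a connection stays on one server instance, but handshakes that span several HTTP requests (such as Socket.io's long-polling fallback) and reconnecting clients can be routed to a different instance. Sticky sessions (session affinity) tell the load balancer to keep sending a given client to the same instance.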

Sticky sessions ensure:

- Connection persistence to a single Node.js instance,

- Reduced risk of session disruption,

- Stable real-time communication flow.

Without sticky sessions, a multi-request handshake can fail, and a reconnecting client may land on an instance that holds none of its in-memory state.
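Session affinity is normally configured in the load balancer rather than written in application code, but the underlying idea is simple enough to sketch: hash a stable client key and use the result to pick an instance. The `pickInstance` function and the instance names below are hypothetical, and the sketch ignores what happens when the instance list changes.

```javascript
const crypto = require('node:crypto');

// Map a stable client key (for example an IP address or session id)
// to one of the available instances. The same key always maps to the
// same instance as long as the instance list stays unchanged.
function pickInstance(clientKey, instances) {
  if (instances.length === 0) throw new Error('no instances available');
  const digest = crypto.createHash('sha256').update(String(clientKey)).digest();
  const index = digest.readUInt32BE(0) % instances.length;
  return instances[index];
}
```

A plain modulo like this remaps many clients whenever an instance is added or removed, which is one reason real load balancers often offer cookie-based affinity or consistent hashing instead.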

Redis Pub/Sub

In a distributed WebSocket backend, multiple Node.js server instances must share real-time events. Redis Pub/Sub is commonly used to synchronize messages across them:

- One server publishes an event

- Redis distributes it to all subscribed instances

- Each instance forwards the event to its connected clients

This ensures that all users receive real-time updates regardless of which server they are connected to.

Redis acts as a lightweight, high-speed communication layer between WebSocket servers in a scalable websocket architecture.
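The publish, distribute, and forward steps can be sketched without a running Redis server. In the example below an in-process `EventEmitter` stands in for the Redis channel, which is an assumption made purely so the snippet runs on its own. The `Instance` class and the `updates` channel name are also hypothetical. In a real deployment the `bus.emit` and `bus.on` calls would be replaced by a Redis client's publish and subscribe operations.

```javascript
const { EventEmitter } = require('node:events');

// Stand-in for a Redis channel so the sketch runs without a Redis server.
const bus = new EventEmitter();

// One WebSocket server instance: it knows only its own local clients.
class Instance {
  constructor(name) {
    this.name = name;
    this.clients = new Set(); // objects with a send(string) method
    // Every instance subscribes to the shared channel.
    bus.on('updates', (message) => this.forward(message));
  }

  // Publish to the shared channel instead of writing to local clients directly.
  publish(event) {
    bus.emit('updates', JSON.stringify(event));
  }

  // Forward a message received from the channel to this instance's clients only.
  forward(message) {
    for (const client of this.clients) client.send(message);
  }
}
```

The important design point is that an instance never sends to its own clients directly. It publishes to the channel and receives its own message back like every other subscriber, so all clients see the same stream regardless of where the event originated.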

Load balancing

Load balancing in WebSocket-based systems is more complex than in traditional HTTP applications due to persistent connections.

A proper load balancing strategy ensures:

- Even distribution of incoming connections across instances.

- Stability of long-lived WebSocket sessions.

- Reduced risk of server overload.

Common approaches include:

- Layer 4 (TCP) load balancing for performance efficiency.

- Layer 7 (HTTP) load balancing for application-aware routing.

- Sticky session support for connection consistency.

Combined with Redis and horizontal scaling, load balancing forms the foundation of a stable, scalable real-time architecture capable of handling enterprise workloads.

Event-Driven Backend Development with Node.js

At the core of modern distributed systems is event-driven backend development, where services communicate asynchronously through events instead of direct API calls. Rather than relying on tightly coupled request/response flows, systems emit events like “user_created” or “order_updated”, which other services can subscribe to and handle independently in real time. This approach fits naturally with Node.js due to its non-blocking architecture and is widely used in real-time applications such as trading platforms and dashboards.

Pub/Sub model

The publish–subscribe (Pub/Sub) model is a foundational pattern in event-driven systems.

- A service publishes an event,

- Multiple services subscribe to that event,

- Each subscriber processes it independently.

This decouples services and allows systems to scale without tight dependencies between components.
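To make the decoupling concrete, here is a tiny in-process publish-subscribe bus. It is only an illustration of the pattern, not a message broker: the `EventBus` class and its methods are assumptions for this sketch, and delivery is synchronous and limited to a single process.

```javascript
// Tiny in-process publish-subscribe bus (illustrative; not a message broker).
class EventBus {
  constructor() {
    this.handlers = new Map(); // topic -> Set of handler functions
  }

  // Register a handler; returns a function that unsubscribes it.
  subscribe(topic, handler) {
    if (!this.handlers.has(topic)) this.handlers.set(topic, new Set());
    this.handlers.get(topic).add(handler);
    return () => this.handlers.get(topic).delete(handler);
  }

  // Deliver the event to every subscriber. A failing subscriber does not
  // prevent the others from running; collected errors are returned.
  publish(topic, payload) {
    const errors = [];
    for (const handler of this.handlers.get(topic) ?? []) {
      try {
        handler(payload);
      } catch (err) {
        errors.push(err);
      }
    }
    return errors;
  }
}
```

The publisher only knows the topic name, for example `order_updated`, and never the list of subscribers. New consumers can be added without touching the code that emits the event.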

Message brokers

To support large-scale event-driven systems, message brokers are used to manage event distribution.

Common technologies include:

- Apache Kafka for high-throughput event streaming,

- RabbitMQ for reliable message queuing,

- Redis Pub/Sub for lightweight real-time messaging.

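Kafka and RabbitMQ can persist and acknowledge messages, which makes delivery reliable across distributed services. Redis Pub/Sub is fire-and-forget: a subscriber that is disconnected when an event is published does not receive it.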

Event streaming

Event streaming extends the Pub/Sub model by continuously processing streams of data rather than isolated messages.

In real-time systems, this allows:

- Continuous ingestion of data,

- Real-time analytics and transformations,

- Immediate propagation of updates across services.

Node.js plays a key role in processing these streams efficiently.

Streaming Backend with Node.js

A streaming backend built on Node.js is designed to continuously process and deliver data in real time, rather than waiting for complete request cycles. This approach is essential for systems that rely on live updates, high-frequency data flows, or continuous event ingestion.

In some cases, Server-Sent Events provide a simpler alternative for unidirectional streaming.

Server-Sent Events

One of the simplest streaming approaches is Server-Sent Events (SSE), which enables a unidirectional channel where the server continuously pushes updates to the client over a persistent HTTP connection. It is lightweight, easy to implement, and well-suited for notifications, dashboards, and live feeds where client-to-server communication is minimal.
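A minimal SSE endpoint needs nothing beyond Node's built-in `http` module. The sketch below is one possible shape: the `/events` path, the `tick` event name, and the one-second interval are arbitrary choices for illustration.

```javascript
const http = require('node:http');

// Serialize one event in the text/event-stream wire format.
function formatSseEvent(eventName, data, id) {
  let frame = '';
  if (id !== undefined) frame += `id: ${id}\n`;
  frame += `event: ${eventName}\n`;
  // JSON.stringify output contains no raw newlines, so one data field is enough.
  frame += `data: ${JSON.stringify(data)}\n`;
  return frame + '\n'; // a blank line terminates the event
}

// Hypothetical endpoint that pushes a counter once per second.
function createSseServer() {
  return http.createServer((req, res) => {
    if (req.url !== '/events') {
      res.writeHead(404).end();
      return;
    }
    res.writeHead(200, {
      'Content-Type': 'text/event-stream',
      'Cache-Control': 'no-cache',
      Connection: 'keep-alive',
    });
    let tick = 0;
    const timer = setInterval(() => {
      tick += 1;
      res.write(formatSseEvent('tick', { tick }, tick));
    }, 1000);
    req.on('close', () => clearInterval(timer)); // stop when the client disconnects
  });
}

// createSseServer().listen(3000); // port chosen arbitrarily for the example
```

On the client side, the browser's `EventSource` API can consume this stream and reconnects automatically if the connection drops.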

Kafka streaming

For more advanced and high-throughput systems, Kafka streaming is often used as the backbone of data pipelines. Kafka enables distributed, fault-tolerant streaming of large volumes of events across services, making it ideal for processing logs, financial transactions, and real-time analytics workloads.

In modern architectures, these tools are frequently combined with real-time data processing layers built on Node.js, allowing incoming streams to be transformed, filtered, and forwarded to downstream services or clients with minimal latency. This makes Node.js a strong fit for handling continuous data flows in scalable, event-driven environments.

Real-time data processing

In a streaming backend, Node.js acts as a processing layer that:

- Consumes incoming data streams,

- Filters and transforms events,

- Routes processed data to clients or downstream services.

This allows systems to maintain low latency while handling continuous, high-volume data flows.
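The consume, filter, transform, and route steps map naturally onto Node's stream API. The sketch below assumes incoming messages are JSON strings describing trades. The field names (`symbol`, `price`, `qty`), the `minNotional` threshold, and both function names are hypothetical.

```javascript
const { Transform } = require('node:stream');

// Parse, filter, and reshape one raw trade message.
// Returns null for messages that should be dropped.
function processTrade(raw, minNotional = 1000) {
  let trade;
  try {
    trade = JSON.parse(raw);
  } catch {
    return null; // skip malformed input instead of crashing the stream
  }
  if (!trade || typeof trade.price !== 'number' || typeof trade.qty !== 'number') {
    return null;
  }
  const notional = trade.price * trade.qty;
  if (notional < minNotional) return null; // filter out small trades
  return { symbol: trade.symbol, notional };
}

// Wrap the pure function in an object-mode Transform so it can sit in a
// pipeline between a source (such as a broker consumer) and a sink
// (such as a WebSocket broadcaster).
function createTradeProcessor(minNotional) {
  return new Transform({
    objectMode: true,
    transform(raw, _encoding, callback) {
      const result = processTrade(String(raw), minNotional);
      if (result) this.push(result);
      callback();
    },
  });
}
```

Keeping the per-message logic in a pure function makes it easy to test in isolation, while the `Transform` wrapper gives the pipeline backpressure handling for free.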

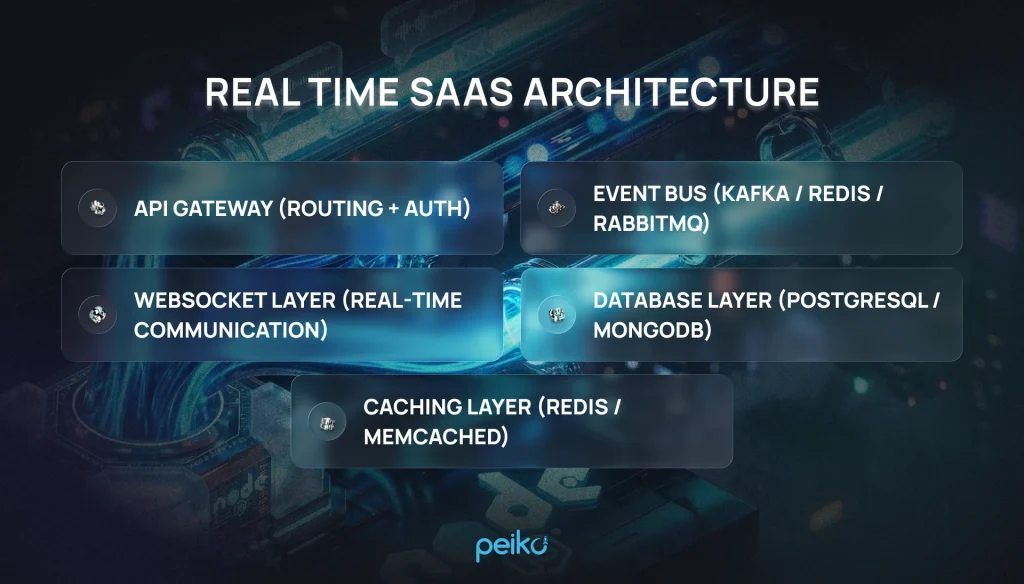

Real-Time SaaS Architecture Blueprint

A production-ready real-time SaaS architecture typically includes four layers: an API gateway, a WebSocket layer, an event bus, and a database layer.

This layered approach ensures separation of concerns and scalability.

API Gateway

The API Gateway acts as the entry point for all client requests. It is responsible for:

- Routing incoming traffic to appropriate services,

- Handling authentication and authorization,

- Enforcing rate limiting and security policies,

- Managing request validation and logging.

In real-time systems, the gateway also plays a key role in managing initial WebSocket connection upgrades and protecting backend services from overload.

WebSocket layer

The WebSocket layer is the core of real-time communication. It enables persistent, bi-directional connections between clients and the backend.

Key responsibilities include:

- Maintaining active client connections,

- Delivering real-time updates instantly,

- Handling connection lifecycle and state,

- Broadcasting events to subscribed users.

This layer is typically built with Node.js and scaled horizontally to support large numbers of concurrent users.

Event bus

The event bus enables asynchronous communication between services in a distributed system.

It is responsible for:

- Publishing and consuming events across services,

- Decoupling system components,

- Ensuring real-time data synchronization.

Common implementations include Redis Pub/Sub for lightweight messaging and Kafka for high-throughput event streaming.

Database layer

The database layer stores application data and ensures consistency across the system.

Typical setup includes:

- Relational databases (PostgreSQL) for structured data,

- NoSQL databases (MongoDB) for flexible, high-scale workloads,

- Time-series databases for analytics and event tracking.

This layer must be optimized for both real-time reads and high-frequency writes.

A well-designed real-time SaaS architecture ensures low latency and high availability.

Recommended Tech Stack for Real-Time SaaS

| Layer | Technology |
| --- | --- |
| API Gateway | Nginx / AWS API Gateway |
| WebSocket Layer | Node.js + ws / Socket.io |
| Event Bus | Kafka / Redis Pub/Sub |
| Database | PostgreSQL / MongoDB |
| Cache | Redis |
| Infrastructure | Kubernetes / Docker |

Scaling Real-Time Applications in the Cloud

Scaling a real-time application, especially one powered by a Node.js WebSocket server, isn't just about adding more servers. It requires thoughtful infrastructure design, smart resource management, and a clear strategy for handling thousands (or even millions) of simultaneous connections without performance drops.

To achieve this, teams typically rely on a few proven approaches.

First, horizontal scaling with Kubernetes allows you to distribute traffic across multiple instances of your application. Instead of relying on a single server, you can dynamically spin up new containers as demand grows, ensuring the system remains responsive even during traffic spikes.

Another key component is using a shared layer like Redis. In a distributed environment, multiple WebSocket servers need to stay in sync—whether it’s user sessions, messages, or events. A Redis cluster enables fast communication between instances and keeps everything consistent in real time.

Equally important is keeping your Node.js services as stateless as possible: shared state such as sessions and subscriptions lives in an external store rather than in local process memory. Individual WebSocket connections still belong to one instance, but any instance can serve any user who connects or reconnects, which makes scaling much easier.

To keep everything running smoothly, you also need centralized logging and monitoring. Tools like Prometheus, Grafana, and OpenTelemetry give you deep visibility into system performance. They help track metrics, detect anomalies, and troubleshoot issues before they impact users.

Finally, modern real-time systems rely heavily on auto-scaling policies. By monitoring key metrics such as active connections, CPU usage, and memory consumption, your infrastructure can automatically scale based on demand. This ensures optimal performance while also keeping cloud costs under control.

When all these elements come together, you get a resilient, scalable WebSocket architecture that can handle heavy loads—delivering fast, reliable real-time experiences no matter how many users are connected.

Security in Real-Time Node.js Backends

Security is a core requirement for any enterprise-grade real-time application. When you’re dealing with persistent connections and continuous data flow, even small vulnerabilities can quickly turn into major risks. It’s important to protect each and every layer of the system.

One of the first steps is implementing authentication for WebSocket connections, typically using JSON Web Tokens (JWT). Tokens are validated during the initial handshake, ensuring that only authorized users can establish and maintain a connection.

From there, it’s essential to control how users interact with the system. Rate limiting—applied per user or IP—helps prevent abuse, protects against brute-force attempts, and keeps the system stable under load. At the infrastructure level, DDoS mitigation at the gateway or load balancer adds another layer of defense, filtering out malicious traffic before it reaches your application.
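Per-user or per-IP rate limiting is often implemented as a token bucket. The sketch below is one simple in-memory variant. The `RateLimiter` class and its parameters are assumptions for illustration, and a multi-instance deployment would need to keep the counters in a shared store.

```javascript
// Per-key token bucket: each key (user id or IP) may send `capacity` messages
// in a burst, then `refillPerSecond` messages per second on average.
class RateLimiter {
  constructor(capacity, refillPerSecond) {
    this.capacity = capacity;
    this.refillPerSecond = refillPerSecond;
    this.buckets = new Map(); // key -> { tokens, updatedAt }
  }

  // Returns true if the message is allowed, false if it should be rejected.
  allow(key, now = Date.now()) {
    const bucket = this.buckets.get(key) ?? { tokens: this.capacity, updatedAt: now };
    const elapsedSeconds = (now - bucket.updatedAt) / 1000;
    bucket.tokens = Math.min(
      this.capacity,
      bucket.tokens + elapsedSeconds * this.refillPerSecond
    );
    bucket.updatedAt = now;
    this.buckets.set(key, bucket);
    if (bucket.tokens < 1) return false;
    bucket.tokens -= 1;
    return true;
  }
}
```

A WebSocket message handler would call `allow(userId)` before processing each inbound message and drop or close on `false`. The map in this sketch grows with the number of distinct keys, so a real implementation would also evict idle buckets.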

All communication should also be encrypted using WSS (WebSocket Secure) over TLS, which protects data in transit and prevents interception or tampering. This is especially critical for applications handling sensitive or financial data.

Finally, for many US-based SaaS companies, security goes beyond best practices—it’s a compliance requirement. Building a SOC 2–ready architecture ensures that your system meets strict standards for data security, availability, and confidentiality, which is often a must-have for enterprise clients.

When these measures are combined, they create a solid foundation that allows real-time systems to scale without compromising trust or safety.

Common Mistakes in Real-Time Backend Development

Building real-time systems can look straightforward at first, but many projects run into serious issues because of hidden architectural flaws. These problems often don’t show up early on—they appear later, when the system starts handling real users and higher loads.

One of the most common mistakes is relying on a single-instance WebSocket deployment. While it may work for a prototype, it quickly becomes a bottleneck in production, creating a single point of failure and limiting scalability.

Another frequent issue is skipping a proper messaging layer. Without a message broker like Redis or Apache Kafka, it becomes difficult to synchronize data across multiple services or instances. As your system grows, this lack of coordination leads to inconsistencies and performance problems.

Developers also sometimes overlook how blocking I/O operations in Node.js can impact performance. Since Node.js relies on an event-driven, non-blocking model, even a few blocking calls can slow down the entire system and degrade the real-time experience.
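The same problem applies to long synchronous computations, not only blocking I/O calls. One common mitigation is to split the work into chunks and yield to the event loop between them, as in the sketch below. The `sumInChunks` function and its chunk size are hypothetical, and heavier workloads are usually better moved to worker threads or a separate service.

```javascript
// Sum a large array without monopolizing the event loop: process a chunk,
// then yield with setImmediate so pending I/O callbacks can run.
function sumInChunks(values, chunkSize = 10_000) {
  return new Promise((resolve) => {
    let total = 0;
    let index = 0;

    function runChunk() {
      const end = Math.min(index + chunkSize, values.length);
      for (; index < end; index += 1) total += values[index];
      if (index < values.length) {
        setImmediate(runChunk); // let other callbacks run before continuing
      } else {
        resolve(total);
      }
    }

    runChunk();
  });
}
```

Between chunks the server can keep delivering WebSocket messages, so a single expensive request delays other clients by at most one chunk's worth of work.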

Another critical gap is the lack of observability and tracing. Without proper monitoring, logging, and distributed tracing, it’s nearly impossible to understand what’s happening inside your system—or to diagnose issues quickly when something goes wrong.

Avoiding these pitfalls is key to building reliable, scalable real-time applications. With the right architecture in place from the start, your Node.js backend can handle growth smoothly while maintaining performance and stability.

How We Help US Companies Build Real-Time Systems

Building real-time systems is no longer optional for competitive SaaS platforms—it is a foundational requirement.

We help US companies design and implement:

- real-time backend development services

- enterprise-grade WebSocket infrastructure

- scalable event-driven platforms

- production-ready SaaS architectures

Whether you’re building a trading platform, analytics dashboard, or collaboration tool, we deliver enterprise real-time consulting and engineering execution.

We understand the requirements of US enterprises—from SOC 2 compliance to cloud-native architecture and distributed team collaboration—and design systems that meet them from day one.

Final Thoughts

Real-time application development with Node.js is a powerful approach. Your success depends on getting the architecture right from the start.

By combining WebSockets, event-driven design, streaming pipelines, and cloud-native infrastructure, you can build systems that are fast, scalable, and ready for real-world demand.
